DVN: Can you tell us a little about how Whale Dynamic was founded and what the company’s key areas of focus are?

I began my journey in MEMS research at Cambridge University, working on laser diodes and thin-film technology. After graduation, I joined Baidu’s Autonomous Driving Division. But in 2018, my co-founders and I saw a bigger challenge: making sensors work together with the kind of precision needed for safe autonomous driving.

We left Baidu to focus on LiDAR–camera fusion and precise synchronization, and soon built a mapping vehicle to test our ideas. That work led to the creation of Whale Dynamic.

Today, Whale Dynamic goes one step further by building custom delivery vehicles that combine our mapping and perception technology. Our mission is to help partners deploy safe, scalable, and practical autonomous solutions worldwide.

DVN: How has the robot delivery market evolved in Shenzhen and in China in general?

In China, robo-delivery vehicles can be purchased for as little as $1,000, with an additional monthly platform subscription fee. Around a dozen serious players in this market have each deployed roughly 1,000 pilot units. However, the extremely low pricing makes it difficult to build a sustainable business in China, where these vehicles also face strong competition from low-cost human labor. Whale, by contrast, has focused on delivering a safety-oriented platform to partners in Japan, Singapore, and other international markets.

DVN: Are revenue-generating services already running, or when are they expected?

So far, we have deployed pilot projects in Japan, Singapore, Abu Dhabi, the UK, and India (where we delivered an autonomous driving development platform called the Drivable Testing Vehicle). These pilots operate on campuses or in other closed environments and have not yet been conducted on public roads.

DVN: What is the advantage of a custom delivery vehicle versus a standard passenger car or van?

Our vehicles offer a highly competitive solution. They can generate around $150–$200 per day, achieving payback in under a year, with lower maintenance costs than competitors like DoorDash.
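As a rough illustration of the payback figure quoted above, the arithmetic can be sketched in a few lines. The interview does not state a vehicle price or operating costs, so `HYPOTHETICAL_VEHICLE_COST_USD`, the zero default operating cost, and the assumption that the vehicle earns revenue every day of the year are placeholders for illustration only, not Whale Dynamic figures.

```python
import math


def payback_days(vehicle_cost_usd, daily_revenue_usd, daily_operating_cost_usd=0.0):
    """Days of operation needed for net daily income to cover the upfront cost."""
    net_per_day = daily_revenue_usd - daily_operating_cost_usd
    if net_per_day <= 0:
        raise ValueError("net daily income must be positive to ever pay back")
    return math.ceil(vehicle_cost_usd / net_per_day)


def max_cost_for_payback(daily_revenue_usd, days=365, daily_operating_cost_usd=0.0):
    """Largest upfront cost that is still recovered within `days` of operation."""
    return (daily_revenue_usd - daily_operating_cost_usd) * days


# Hypothetical upfront cost; the interview does not give a vehicle price.
HYPOTHETICAL_VEHICLE_COST_USD = 40_000

# The two ends of the revenue range quoted in the interview.
for daily_revenue in (150, 200):
    days = payback_days(HYPOTHETICAL_VEHICLE_COST_USD, daily_revenue)
    ceiling = max_cost_for_payback(daily_revenue)
    print(f"${daily_revenue}/day: payback in {days} days; "
          f"one-year payback holds up to ${ceiling:,.0f} upfront")
```

Under these assumptions, payback in under a year at the quoted revenue range implies an upfront cost below roughly $54,750 to $73,000; any real operating cost or idle days would lower that ceiling.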

DVN: How many sensors and what type of sensors does the vehicle use?

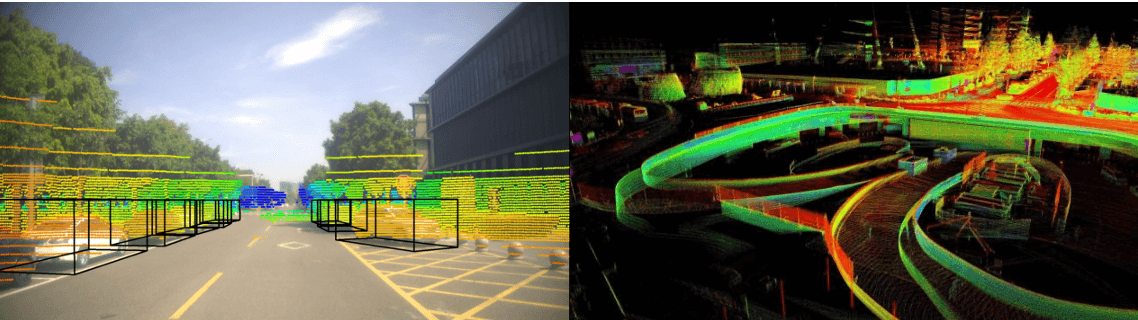

Whale relies on HD maps together with LiDAR and camera sensors. Our LiDAR suppliers include RoboSense and Hesai, and the cost of these sensors has become highly competitive. We do not use radar. Since our operations are limited to restricted service areas such as campuses, the HD mapping process remains relatively low-cost, and we employ auto-labeling tools to efficiently train our perception stack.

Our approach is similar to Waymo’s, utilizing a 360° spinning LiDAR sensor along with two additional blind-spot LiDARs. This setup eliminates the need for frequent recalibration, which is often required when using multiple 120° FOV sensors.

DVN: Does the technology differ significantly from the robotaxi market? Is speed (and hence sensor range) the main difference, or are there other considerations?

Our driving methodology is quite similar, but we work with a smaller ODD and do not operate in severe weather conditions, which makes deployment easier. Our HD map-based approach is well-suited for campuses and other gated areas. However, generating HD maps for robotaxis becomes costly as service areas expand, while non-map-based approaches face significant challenges, particularly in China, where signage and driving rules vary greatly from region to region.

DVN: Are you using Nvidia hardware to run the driving software stack? How do Chinese alternatives like Horizon Robotics compare?

Yes, we use Nvidia servers for training on AWS and Alibaba Cloud. We are using Orin processors in the vehicle and will migrate to Thor. We leverage Nvidia’s tools and CUDA libraries, which makes it harder to migrate to alternatives like Qualcomm or Horizon. Those processors may be cheaper, but engineering costs will be higher.

DVN: Is the software stack perception + control, or is it end-to-end AI? What are the advantages of your approach?

For now, perception and control are kept separate for safety reasons. This separation makes it easier to debug and correct actions if something goes wrong. We have experimented with end-to-end AI for driving, but efficiency comes at the expense of safety. Remote teleoperation is required in most deployments whenever a vehicle becomes stuck.

DVN: Is the software built on top of a third-party stack, for example Nvidia or TIER IV?

We use Autoware and Baidu’s Apollo platform with Cyber RT middleware, while all core modules, from perception to localization, are developed in-house. Both platforms offer broad support for Nvidia hardware.

DVN: What key partnerships have you built, or do you plan to build, to deploy your technology successfully?

Kudan in Japan has been both an investor and a key partner, while TIER IV is another important partner with whom we collaborate across multiple areas. In the US, we have a partner that uses our tools to collect and generate HD maps that integrate image and LiDAR data, delivering superior accuracy. We are also working with joint ventures in the US, Singapore, and Japan to deploy delivery services.