Ambarella SoCs for Gauzy Camera Monitoring System

Gauzy, a global supplier of light and vision control technology, has incorporated a CVflow system-on-chip (SoC) from AI semiconductor company Ambarella into the Gauzy Smart-Vision camera monitoring system (CMS), to enhance road safety for drivers of commercial vehicles. The Gauzy solution, based on Ambarella’s CV2FS SoC, is already operational in Ford Trucks.

CMS replaces traditional side and/or rearview reflective-glass mirrors with high-resolution cameras mounted around the vehicle. Also known as e-mirrors, these systems stream live video to interior displays, giving drivers a wider view of their surroundings and significantly reducing blind spots, thus improving overall safety and visibility on the road.

The Smart-Vision CMS, based on Ambarella’s CVflow AI accelerator, features self-learning and predictive capabilities. These include adaptive manoeuvre lines that are displayed while a vehicle is in motion and during trailer calibration to give drivers better visibility of their surroundings, as well as the ability to quickly analyse large amounts of data and imagery for significantly reduced latency and real-time visibility.

Moreover, the Smart-Vision system is programmed to automatically detect potential road hazards before they are encountered, greatly reducing the possibility of accidents or fatalities, according to the company. The system also provides clear lines of sight via high image quality, across a wide variety of weather or lighting conditions. The company says that the Smart-Vision CMS’s surveillance mode deters theft and vandalism, is compatible with various truck models and configurations, and can be easily integrated into existing fleets.

“It’s highly rewarding for us to be working so closely with Ambarella, a global leader in edge AI vision processing, to advance the functionality and quality of Smart-Vision,” stated Eyal Peso, CEO of Gauzy. “World-renowned brands like Ambarella trust Gauzy to utilize their proprietary technology to its full potential, and partner with us because of the success our products can have in saving lives and reducing costs. We recognized early on the monumental impact AI will have in redefining mobility and are proud to have already developed what we believe is the most sophisticated AI-powered ADAS solution on the market. We believe that enhancing our Smart-Vision system with Ambarella’s CVflow AI SoCs, which provide industry-leading AI performance per watt, provides OEMs with an innovative solution as part of their ongoing efforts to improve the safety of their fleets.”

Peso added, “Despite the tremendous advancements we have already made in ADAS, we are still at the beginning of the AI-ification of vision control. We will continue to innovate and improve the quality of our products, to retain our competitive edge and produce for our shareholders. We are energized by the strong demand for our Smart-Vision CMS and believe that we are well positioned to capitalize on the renewed emphasis on safety in urban and intercity transport.”

Among the biggest differentiators of Gauzy’s Smart-Vision system with Ambarella’s CVflow SoCs is its high-performance image processing capability. This is needed to keep latency low and to enable vulnerable road user (VRU) detection, object classification, data recording and video streaming.

Ambarella’s CVflow SoC architecture enables Gauzy’s development teams to fine-tune various parameters, resulting in superior image quality and highly accurate color and contrast representation, which is essential for minimizing driver fatigue.

“Our partnership with Gauzy demonstrates the power of what’s possible when two forward-thinking organizations with similar values work together to tackle industry challenges,” commented Fermi Wang, president and CEO of Ambarella. “We were motivated to help Gauzy and its OEM customers address the millions of road traffic fatalities that are estimated to occur each year, and we believe the Smart-Vision CMS, with our CVflow AI SoCs, has the potential to drive this number lower. There is universal support for making our roadways safer, and we are pleased to help play a role in this effort through the application of our technology.”

The Smart-Vision CMS has passed strict homologations and certifications for safety, including UNECE R10, R46, R118, R151, and automotive cybersecurity standards including UNECE R155 and R156.

DVN comments

Gauzy’s Smart-Vision system, integrated with Ambarella’s CVflow AI system-on-chip (SoC), offers several specific benefits for vulnerable road users (VRUs) in urban environments. CMS replaces traditional rearview mirrors with high-resolution cameras, providing a wider view and significantly reducing blind spots. The system has predictive and self-learning capabilities, allowing for better visibility and rapid analysis of real-time data. This includes adaptive manoeuvring lines displayed during vehicle movement and trailer calibration, giving drivers better visibility of their surroundings.

TriEye High-Resolution SWIR Image Sensor Enters Production

TriEye is bringing short-wave infrared (SWIR) sensing to mass production with its Raven, the world’s first CMOS-based SWIR sensor. By solving fundamental constraints in manufacturing, TriEye’s unique sensor design provides high resolution, low power consumption, a small form factor, and a 99-per-cent price reduction compared with current InGaAs technology.

TriEye’s full-stack solution allows any machine vision system to operate and deliver image data and actionable information even under the most challenging visibility conditions. The company says its deep expertise in device physics, process design, electro-optics, and system engineering enables it to create SWIR systems that can support a wide range of mass-market applications.

The Raven TES200 is a 1.23-megapixel CMOS-based SWIR sensor with a resolution of 1,284H × 960V pixels. It has a global shutter in a 2/3″ optical format and delivers a frame rate of 120 fps (12 bit), 150 fps (10 bit) or 180 fps (8 bit).
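As a rough illustration of what these readout modes imply, the sketch below multiplies the headline pixel count by each quoted frame rate and bit depth. It counts raw pixel payload only; blanking intervals and interface overhead are ignored, and the function and constant names are illustrative rather than anything from TriEye.

```python
# Figures quoted in the article for the Raven TES200
PIXELS = 1.23e6  # headline resolution, pixels per frame

# (frames per second, bits per pixel) for each quoted readout mode
MODES = [(120, 12), (150, 10), (180, 8)]


def raw_rate_gbps(fps, bits_per_pixel, pixels=PIXELS):
    """Raw pixel payload in Gbit/s, ignoring blanking and protocol overhead."""
    return pixels * fps * bits_per_pixel / 1e9


for fps, bits in MODES:
    print(f"{fps} fps @ {bits}-bit: {raw_rate_gbps(fps, bits):.2f} Gbit/s")
```

All three modes come out at roughly 1.8 Gbit/s, which would be consistent with the frame rate being traded against bit depth under a fixed readout bandwidth, although the article does not say so.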

The sensor has a pixel pitch of 7 × 7 µm and a wavelength range of 700 to 1,650 nm, with a quantum efficiency of 60 per cent at 1,245 nm and a full well capacity of 440,000 e-. Its conversion gain is 0.0064 DN/e- and its dark current is 100 pA. It has an 8/10/12-bit ADC, an imager size of 8.98 × 6.72 mm, and optical crosstalk of 0.5 to 2 per cent.
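A quick consistency check on these figures: dividing the imager dimensions by the pixel pitch gives the pixel grid, and multiplying the full well capacity by the conversion gain gives the output code at saturation. The sketch below uses only numbers quoted above. It assumes the conversion gain refers to the 12-bit output, which the article does not state, and the names are illustrative.

```python
PIXEL_PITCH_UM = 7.0          # pixel pitch from the article
IMAGER_MM = (8.98, 6.72)      # imager width and height from the article
FULL_WELL_E = 440_000         # full well capacity, electrons
CONV_GAIN_DN_PER_E = 0.0064   # conversion gain, DN per electron


def pixels_along(length_mm, pitch_um=PIXEL_PITCH_UM):
    """Number of pixels that fit along one side of the imager."""
    return length_mm * 1000.0 / pitch_um


cols = pixels_along(IMAGER_MM[0])
rows = pixels_along(IMAGER_MM[1])
megapixels = cols * rows / 1e6

# Output code at full well, compared with the 12-bit ADC ceiling (assumption)
full_well_dn = FULL_WELL_E * CONV_GAIN_DN_PER_E
adc_max_dn = 2**12 - 1

print(f"{cols:.0f} x {rows:.0f} pixels, {megapixels:.2f} MP")
print(f"full well = {full_well_dn:.0f} DN of {adc_max_dn} DN available")
```

The geometry works out to about 1,283 columns by 960 rows, or about 1.23 megapixels, in line with the headline resolution. A full well corresponds to about 2,816 DN, which fits within a 12-bit range.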

The Raven TES200 supports monochrome imaging and RAW-8, -10, and -12 output formats. It offers horizontal and vertical binning or sub-sampling, and a configurable region of interest (ROI) with windowing. The sensor has a configurable 24-bit parallel interface (up to 125 MHz) and a fast I2C control interface (up to 1 Mbps). It has external frame synchronization and up to 8 general-purpose outputs and triggers, along with an integrated temperature monitor and 512 bits of OTP memory. It requires a DC supply voltage of 1.2 V or 3.3 V, and dissipates 500 mW of power.

DVN comments

TriEye’s sensor can be integrated into the company’s SEDAR (Spectrum Enhanced Detection And Ranging) platform, which provides high-resolution, long-distance detection capabilities. Its robust performance across various environmental conditions, from bright sunlight to complete darkness, ensures consistent reliability. This adaptability aligns well with the stringent requirements of the new US AEB regulation, FMVSS 127, which calls for dependable operation in diverse scenarios.

IR Imager Merges AI, Thermal Physics

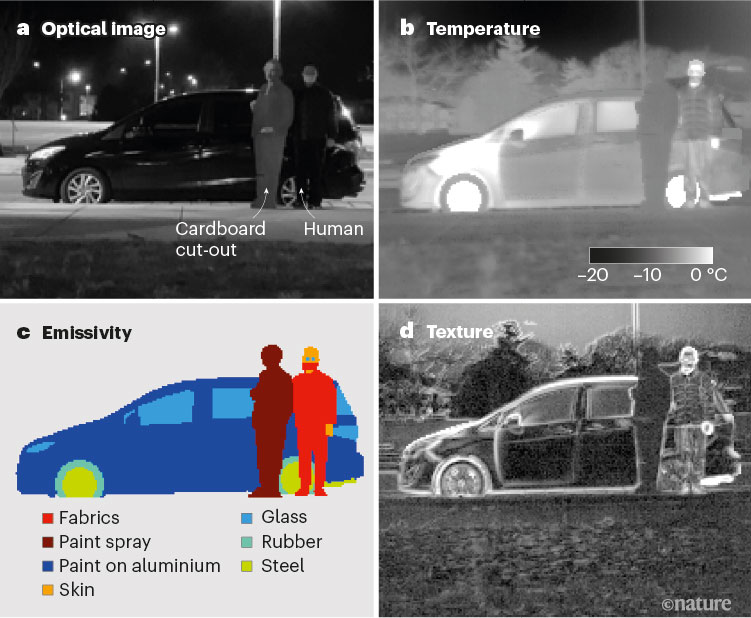

Heat-assisted detection and ranging (HADAR), a patent-pending thermal imaging technology from Purdue University, combines infrared (IR) imaging, machine learning, and thermal physics to visualize target objects in the dark as if it were broad daylight. According to its developers, the technology could have an impact on par with lidar, sonar, and radar, by enabling fully passive, physics-aware machine perception.

Traditional active sensors such as lidar, radar, and sonar emit signals, and can run into signal interference and eye-safety risks when they are deployed at scale across many automated vehicles and robots. “Each of these agents will collect information about its surrounding scene through advanced sensors to make decisions without human intervention,” Professor Zubin Jacob said. “However, simultaneous perception of the scene by numerous agents is fundamentally prohibitive.”

Video cameras designed to work in sunlight or with other sources of illumination are impractical for use in low-light conditions. Traditional thermal imaging can sense through darkness, inclement weather, and solar glare. However, ghosting — a thermal imaging effect that causes hazy images lacking in material specificity, depth, or texture — makes it difficult to use traditional thermal imaging for object detection.

“Objects and their environment constantly emit and scatter thermal radiation, leading to textureless images famously known as the ‘ghosting effect,’” researcher Fanglin Bao said. “Thermal pictures of a person’s face show only contours and some temperature contrast; there are no features, making it seem like you have seen a ghost. This loss of information, texture, and features is a roadblock for machine perception using heat radiation.”

TeX-Net deep neural network

Using a computational approach and machine learning, HADAR reconstructs the target’s temperature, emissivity, and texture (TeX), even in complete darkness. The TeX information is presented in the HSV color space to form the TeX vision for the AI model known as TeX-Net.

TeX-Net is a deep neural network designed to perform inverse TeX decomposition. When provided with a hyperspectral cube of data, TeX-Net decomposes it into three maps: a temperature map, an emissivity map for materials in the materials library, and thermal lighting factors. TeX-Net is trained using a physics-based data reconstruction loss function and can also be trained with direct supervision if the ground-truth TeX decomposition is available.

A significant challenge for the researchers was the limited availability of high-quality training data. However, the physics-based loss function allowed them to compensate for the limited data and still train TeX-Net effectively. By disentangling information within the complex heat signal, HADAR operates effectively in total darkness as if it were daylight.
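To give a feel for what a physics-based reconstruction loss can look like, here is a minimal single-pixel sketch. It is not the researchers’ implementation. It assumes a simple model in which the measured spectrum is the surface’s own emission (emissivity times Planck blackbody radiance at its temperature) plus reflected radiation from the surroundings, and it scores a candidate decomposition by how well it re-synthesizes the measurement. The band choice, emissivity values, and function names are all hypothetical.

```python
import math

H = 6.62607015e-34   # Planck constant, J*s
C = 2.99792458e8     # speed of light, m/s
KB = 1.380649e-23    # Boltzmann constant, J/K


def planck(wavelength_m, temp_k):
    """Blackbody spectral radiance B(lambda, T) in W / (sr * m^3)."""
    a = 2.0 * H * C**2 / wavelength_m**5
    return a / math.expm1(H * C / (wavelength_m * KB * temp_k))


def forward_model(temp_k, emissivity, ambient, wavelengths_m):
    """Assumed per-pixel model: emitted part plus reflected ambient part."""
    return [e * planck(w, temp_k) + (1.0 - e) * x
            for e, x, w in zip(emissivity, ambient, wavelengths_m)]


def reconstruction_loss(observed, temp_k, emissivity, ambient, wavelengths_m):
    """Mean squared relative error between observed and re-synthesized spectra."""
    predicted = forward_model(temp_k, emissivity, ambient, wavelengths_m)
    return sum(((o - p) / o) ** 2
               for o, p in zip(observed, predicted)) / len(observed)


# Hypothetical pixel: five long-wave IR bands, a 300 K surface,
# and surroundings that radiate like a 280 K blackbody.
wavelengths = [8e-6, 9e-6, 10e-6, 11e-6, 12e-6]
emissivity = [0.90, 0.92, 0.95, 0.93, 0.91]
ambient = [planck(w, 280.0) for w in wavelengths]
observed = forward_model(300.0, emissivity, ambient, wavelengths)

loss_true = reconstruction_loss(observed, 300.0, emissivity, ambient, wavelengths)
loss_wrong = reconstruction_loss(observed, 310.0, emissivity, ambient, wavelengths)
print(loss_true, loss_wrong)
```

The loss is zero for the decomposition that generated the data and grows when the temperature guess is off by 10 K. Because the loss needs only the measurement and the physics, not labelled examples, a term of this kind is one plausible way a network could learn from limited training data.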

The team tested HADAR TeX vision using an off-road nighttime scene. HADAR ranging at night was found to outperform thermal ranging. In daylight, it showed an accuracy comparable with RGB. Automated HADAR thermography reached the Cramér-Rao bound on temperature accuracy, surpassing existing thermography techniques.

The researchers developed an estimation theory for HADAR and addressed photonic shot-noise limits depicting information-theoretic bounds to HADAR-based AI performance.

Further work on HADAR will focus on reducing the size of the hardware and increasing the data collection speed, the researchers said.

“The current sensor is large and heavy, since HADAR algorithms require many colours of invisible infrared radiation,” Bao said. “To apply it to self-driving cars or robots, we need to bring down the size and price while also making the cameras faster. The current sensor takes around one second to create one image, but for autonomous cars we need around 30- to 60-Hz frame rate, or frames per second.”

Initially, HADAR TeX vision will be used in automated vehicles and robots that interact with humans in complex environments. The technology could be further developed for agriculture, defence, geosciences, health care, and wildlife monitoring applications.

DVN comments

Traditional technologies like lidar, radar, and sonar can encounter signal interference and eye-safety risks when scaled up. HADAR overcomes these limitations by using a passive approach that combines infrared imaging, machine learning, and thermal physics to detect and localize objects. It reconstructs the temperature, emissivity and texture of targets even in complete darkness, surpassing existing thermographic techniques in accuracy. The technology is initially intended for autonomous vehicles and robots but could be developed for other applications like traffic surveillance and security.