# Voyant Photonics showcased its new Carbon FMCW LiDAR sensor at CES 2025

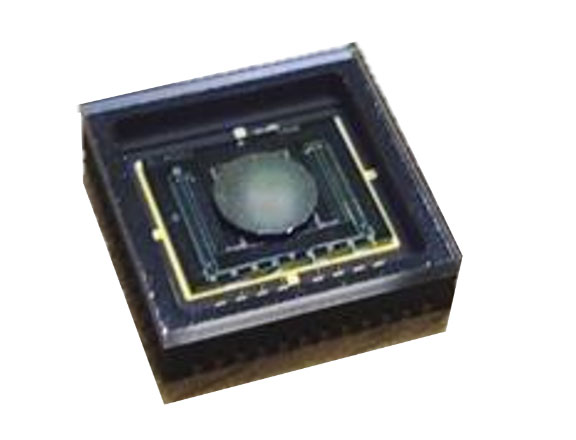

Aimed at industrial applications, Voyant Photonics demonstrated its frequency-modulated continuous-wave (FMCW) LiDAR sensor with highly accurate detection and tracking of moving objects up to 200 meters. The company integrated optics on a LiDAR photonic IC to achieve a low-cost 4D LiDAR sensor that it claims will revolutionize machine perception capabilities in industrial, robotics and security applications. This highly integrated, fingernail-sized silicon photonic chip provides high resolution and object detection up to 200 meters with a precision of <2 cm.

Named Carbon, the FMCW LiDAR sensor is powered by the company’s LiDAR-on-a-chip with solid-state beam steering. FMCW technology measures instantaneous velocity at each point in addition to the traditional distance, reflectivity and intensity measurements. This provides 4D capability, delivering high-fidelity point-cloud data with high accuracy and a real-time view of the environment updated up to 20 times per second. The instantaneous velocity also enables vehicle ego positioning, which is particularly useful in GPS-denied environments, the company said.
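To illustrate how an FMCW sensor can recover range and velocity from a single measurement, here is a minimal sketch that assumes a triangular (up/down) frequency chirp. The chirp bandwidth, chirp duration, 1550 nm wavelength, function names and sign convention are illustrative assumptions and are not Voyant specifications.

```python
C = 299_792_458.0  # speed of light in m/s


def beat_frequencies(range_m, closing_speed_mps, bandwidth_hz, chirp_s, wavelength_m):
    """Simulate the up- and down-chirp beat frequencies for one target.

    Assumed convention: a target approaching the sensor (positive closing
    speed) lowers the up-chirp beat and raises the down-chirp beat.
    """
    slope = bandwidth_hz / chirp_s                       # chirp slope in Hz/s
    f_range = 2.0 * range_m * slope / C                  # beat from round-trip delay
    f_doppler = 2.0 * closing_speed_mps / wavelength_m   # Doppler shift
    return f_range - f_doppler, f_range + f_doppler


def range_and_velocity(f_up_hz, f_down_hz, bandwidth_hz, chirp_s, wavelength_m):
    """Invert the two beat frequencies into range and closing speed."""
    slope = bandwidth_hz / chirp_s
    f_range = 0.5 * (f_up_hz + f_down_hz)      # the Doppler term cancels in the sum
    f_doppler = 0.5 * (f_down_hz - f_up_hz)    # the range term cancels in the difference
    return C * f_range / (2.0 * slope), wavelength_m * f_doppler / 2.0


# Hypothetical chirp parameters, chosen only for illustration.
BANDWIDTH_HZ = 1.0e9
CHIRP_S = 10.0e-6
WAVELENGTH_M = 1550e-9

f_up, f_down = beat_frequencies(100.0, 10.0, BANDWIDTH_HZ, CHIRP_S, WAVELENGTH_M)
est_range, est_speed = range_and_velocity(f_up, f_down, BANDWIDTH_HZ, CHIRP_S, WAVELENGTH_M)
print(f"range = {est_range:.2f} m, closing speed = {est_speed:.2f} m/s")
```

Because both quantities come from the same pair of chirps, velocity is available per point without comparing successive frames, which is the property the article describes as instant velocity.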

The Carbon LiDAR sensor is also a fraction of the cost of competing best-in-class LiDAR solutions, according to the company.

The compact LiDAR sensor weighs 250 grams. Key specifications include a high resolution of native 128 lines per frame for camera-level resolution, 1.3-cm range precision and a field of view of 45° vertical and 90° horizontal. The maximum detectable radial velocity is 63 meters/second (140 mph).
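As a quick check of the quoted figures, the sketch below converts the maximum radial velocity to mph and derives the vertical angular pitch. It assumes the 128 lines are spread evenly across the 45° vertical field of view, which is an assumption made for illustration rather than a stated specification.

```python
import math

METERS_PER_MILE = 1609.344


def mps_to_mph(speed_mps):
    """Convert meters per second to miles per hour."""
    return speed_mps * 3600.0 / METERS_PER_MILE


def line_pitch_deg(fov_deg, lines):
    """Angular spacing between scan lines, assuming even spacing."""
    return fov_deg / lines


def line_spacing_m(pitch_deg, range_m):
    """Distance between adjacent scan lines at a given range."""
    return range_m * math.tan(math.radians(pitch_deg))


pitch = line_pitch_deg(45.0, 128)
print(f"{mps_to_mph(63.0):.1f} mph")
print(f"{pitch:.3f} degrees between lines")
print(f"{line_spacing_m(pitch, 200.0):.2f} m between lines at 200 m")
```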

The sensor’s software-defined LiDAR allows for modifying the frame rate and adjusting the field of view during operation to focus on a zone of interest when and where it is needed, making any small object detectable and classifiable, the company said.

Voyant claims that the Carbon LiDAR sensor outperforms best-in-class ToF LiDAR when operating through dust, fog, rain, snow and sunlight. The technology is also said to be unaffected by highly reflective objects, including retroreflectors such as street signs, traffic cones and safety vests.

The LiDAR sensor offers IP67 dust and water protection and strong shock and vibration endurance. The low power required by the FMCW laser technology ensures eye safety.

Voyant anticipates a future TITANIUM version of the sensor with the following specifications:

- 500 m maximum range

- 200 m range at 10% reflectivity

- 1 cm range precision

- 0.6 cm/sec velocity precision

- Up to 120° Horizontal Field of View

- Up to 20° Vertical Field of View

- 0.045° vertical resolution

- Up to 2.86 million samples per second

DVN comments

Voyant also collaborates with NVIDIA on its Isaac Sim robotics simulator. The enhanced simulator now fully models Voyant’s FMCW LiDAR, delivering instantaneous range and velocity measurements for every data point, so that simulated output matches the capabilities of Voyant’s sensors for advanced 3D perception. This makes Voyant’s technology more accessible, allowing customers to independently validate and optimize performance for their specific applications.

# Kyocera Unveiled the World’s First Camera-LIDAR Fusion Sensor with Perfect Optical Alignment at CES 2025

Kyocera’s technology combines cutting-edge precision with unmatched laser irradiation density for parallax-free, long-distance obstacle detection, making it well suited to autonomous driving.

Kyocera Corporation recently developed the Camera-LIDAR Fusion Sensor, which it describes as the first sensor to align the optical axes of a camera and a LIDAR in a single unit. This design enables real-time acquisition of parallax-free superimposed data. According to the company, it also features the highest laser irradiation density among LIDAR sensors, allowing for long-distance, high-precision object detection.

LIDAR is crucial to the commercialization of autonomous driving. It quickly captures accurate long-range 3D data, detecting obstacles precisely in complex environments and at high speeds. LIDAR excels at spatial recognition, determining an object’s distance and size from laser light reflected over a wide area. It is typically paired with a separate camera, but integrating data from the two units has often been delayed by sensor calibration issues. Kyocera’s new Camera-LIDAR Fusion Sensor combines the camera and LIDAR in one unit, providing parallax-free, real-time data integration for efficient and accurate results.

Key Features:

- Camera and LIDAR integration for most accurate object recognition

Kyocera has developed a technology to integrate the camera and LIDAR into a single unit with aligned optical axes. This integration allows for real-time combination of camera image data and LIDAR distance data, enhancing object recognition capabilities.

- High Resolution with World’s Highest Laser Irradiation Density

LIDAR can detect small obstacles at long distances when laser beam density is increased, which improves resolution and accuracy. Kyocera’s sensor achieves a 0.045-degree irradiation density using proprietary laser scanning technology derived from its MFPs and printers, enabling it to detect a 30 cm fallen object at a distance of 100 m.

- High Durability with Proprietary MEMS Mirror

In LIDAR, a MEMS mirror or motor is required to irradiate laser light over a wide and high-density area. However, MEMS mirrors typically have lower resolution and motors tend to wear out quickly. Kyocera’s new integrated sensor provides both higher resolution than motor-based systems and greater durability than conventional MEMS mirrors. A proprietary MEMS mirror, developed with Kyocera’s advanced manufacturing and ceramic package technologies, and high-resolution laser scanning technology, support high-precision sensing for various industries including autonomous vehicles, marine/ships, heavy machinery, and more.
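A rough geometric check shows why the 0.045-degree irradiation density quoted above matters for small objects. The sketch assumes that figure is the angular spacing between adjacent laser samples and that the object faces the sensor squarely; both are assumptions made for illustration, and the function names are hypothetical.

```python
import math


def beam_spacing_m(pitch_deg, distance_m):
    """Lateral distance between adjacent beams at a given range."""
    return distance_m * math.tan(math.radians(pitch_deg))


def beam_steps_across(object_size_m, distance_m, pitch_deg):
    """Number of angular steps spanned by an object of the given size."""
    half_angle = math.atan(object_size_m / (2.0 * distance_m))
    subtended_deg = math.degrees(2.0 * half_angle)
    return subtended_deg / pitch_deg


spacing = beam_spacing_m(0.045, 100.0)
steps = beam_steps_across(0.30, 100.0, 0.045)
print(f"beam spacing at 100 m: {spacing * 100:.1f} cm")
print(f"angular steps across a 30 cm object: {steps:.1f}")
```

Under these assumptions several beams land on a 30 cm object at 100 m, whereas a sensor with a coarser pitch of, say, 0.2 degrees would place at most one beam on it.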

Customization Options

Kyocera customizes solutions for various applications, offering total control from MEMS mirrors to software. This integrated sensor targets automotive and other fields like construction machinery, ships, robots, and security systems.

DVN comments

Optically aligning the Lidar and the camera can be beneficial. The camera provides high resolution in azimuth and elevation, while Lidar excels in depth resolution. Merging their data accelerates object segmentation and allows object speed to be derived quickly from Lidar’s depth information. The two sensors can also assist each other: Lidar can help identify the image region for which the camera should optimize its exposure, and if the Lidar has a programmable scanning profile, the camera can help determine that profile through image analysis.
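The benefit of aligned optical axes can be illustrated with a simple pinhole camera model. In the sketch below, two points lie on the same Lidar ray at different depths. With co-axial optics they fall on the same pixel; with a lateral offset between the sensors they do not, and that depth-dependent shift is the parallax. The intrinsics, the 10 cm baseline and the function name are hypothetical values chosen for illustration, not Kyocera parameters.

```python
def project_to_pixel(point_xyz, focal_px, cx, cy, camera_offset_xyz=(0.0, 0.0, 0.0)):
    """Project a 3D point (Lidar frame, z forward) into a pinhole camera.

    The camera is assumed to share the Lidar's orientation and to be shifted
    by camera_offset_xyz; a zero offset models co-axial optics.
    """
    x = point_xyz[0] - camera_offset_xyz[0]
    y = point_xyz[1] - camera_offset_xyz[1]
    z = point_xyz[2] - camera_offset_xyz[2]
    if z <= 0:
        raise ValueError("point is behind the camera")
    return (focal_px * x / z + cx, focal_px * y / z + cy)


# Two points on the same Lidar ray, at 5 m and 50 m depth.
near = (0.5, 0.0, 5.0)
far = (5.0, 0.0, 50.0)
FOCAL_PX, CX, CY = 1000.0, 640.0, 360.0  # hypothetical camera intrinsics

for label, offset in (("co-axial", (0.0, 0.0, 0.0)), ("10 cm baseline", (0.1, 0.0, 0.0))):
    u_near, _ = project_to_pixel(near, FOCAL_PX, CX, CY, offset)
    u_far, _ = project_to_pixel(far, FOCAL_PX, CX, CY, offset)
    print(f"{label}: shift between near and far point = {abs(u_far - u_near):.1f} px")
```

With separate units, this shift has to be corrected per point using calibration and the measured depth, which is the step a co-axial design avoids.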

# Aeva Introduced Atlas Ultra, Its Slimmest High-Resolution Long-Range 4D LiDAR Sensor at CES 2025

Aeva®, a leader in next-generation sensing and perception systems, announced Aeva Atlas™ Ultra, its newest 4D LiDAR sensor built to meet the performance demands of SAE Level 3 and 4 automated driving systems in passenger and commercial vehicles. To enable safe travel at highway speeds, Atlas Ultra provides up to three times the resolution of Atlas and configurable fields of view with up to 150 degrees of vision across the horizon. On-sensor perception software enables unique detection capabilities at a maximum detection range of up to 500 meters. A 35% slimmer design makes Atlas Ultra ideal for roofline and behind-windshield integration in passenger cars, with minimal impact on vehicle styling and aerodynamics.

“Atlas Ultra is our most powerful LiDAR sensor designed for the performance needs of L3 and L4 highway driving,” said Mina Rezk, Co-Founder and CTO at Aeva. “In recent years, Aeva’s development of 4D LiDAR and our production programs with multiple top global automotive OEMs have led to the versatile Atlas Ultra product. We believe that Atlas Ultra, along with its on-sensor perception and localization features, offers advantages for passenger and commercial vehicle OEMs interested in highway speed functionality.”

Delivering Industry-leading Performance

Aeva’s FMCW 4D LiDAR technology detects velocity and position simultaneously, enhancing safety and automation. With instant velocity data, automated driving systems detect important objects faster and more accurately from greater distances.

Atlas Ultra meets highway-speed autonomous driving needs with a 250-meter range for low-reflectivity targets and up to 500 meters maximum range. It provides clear perception in various scenarios, immune to interference from sunlight, other LiDAR sensors, and retroreflective objects.

On-Sensor Perception Capabilities

Leveraging per point velocity information, Atlas Ultra provides perception and localization outputs directly from the sensor to enable unique capabilities, while helping to reduce compute costs. These capabilities include:

- Detection of Small Objects in Roadway: Identify small objects on the roadway and differentiate retroreflective lane markers from non-drivable road hazards, such as small bricks or tire fragments, at distances up to 150 meters.

- Dynamic Object Detection and Tracking: Detect and track the position and velocity of all dynamic objects with centimetre accuracy at up to twice the distance of conventional 3D time-of-flight LiDAR sensors.

- Late Reveal Dynamic Object Detection: Instantaneously detect dynamic objects that suddenly appear even while remaining partially obstructed, such as a pedestrian or animal emerging onto the road from behind a parked car or thick vegetation.

- Lane Lines & Drivable Region Detection: Detect lane lines and determine drivable regions.

- Aeva Ultra Resolution™: A real-time camera-like image that provides a high-density point cloud resolution.

- Advanced Vehicle Motion Estimation: Estimate vehicle motion in real-time with six degrees of freedom for accurate positioning and navigation without the need for additional inertial or positioning sensors.
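One way per-point velocity can separate moving objects from the static scene is sketched below under simple assumptions. For a sensor moving with a known velocity, a stationary point should show a radial velocity equal to the negative projection of that velocity onto the line of sight; points that deviate by more than a threshold are flagged as dynamic. The function names, the threshold and the sign convention (positive radial velocity means receding) are hypothetical and do not describe Aeva’s on-sensor software.

```python
import math


def unit(v):
    """Normalize a 3D vector to unit length."""
    n = math.sqrt(sum(c * c for c in v))
    return tuple(c / n for c in v)


def expected_static_radial(direction, ego_velocity):
    """Radial velocity a stationary point would show (positive = receding)."""
    return -sum(d * v for d, v in zip(direction, ego_velocity))


def flag_dynamic(points, ego_velocity, threshold_mps=0.5):
    """Return True for each (direction, radial_velocity) pair that is not static."""
    flags = []
    for direction, measured in points:
        residual = measured - expected_static_radial(direction, ego_velocity)
        flags.append(abs(residual) > threshold_mps)
    return flags


ego = (20.0, 0.0, 0.0)             # sensor driving forward at 20 m/s (x forward)
ahead = unit((1.0, 0.0, 0.0))
side = unit((1.0, 1.0, 0.0))
points = [
    (ahead, -20.0),                # static obstacle straight ahead
    (side, -20.0 / math.sqrt(2)),  # static roadside object at 45 degrees
    (ahead, -5.0),                 # lead vehicle travelling at 15 m/s
]
print(flag_dynamic(points, ego))
```

Because the check uses a single frame, a newly visible object can be flagged as moving without first being tracked over several frames.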

Advanced AI Perception Capabilities

Additional advanced perception functions harness the latest AI-based detection, tracking and classification algorithms. These advanced perception algorithms utilize per point velocity information to deliver advantages for automated vehicle systems.

- Pedestrian & Vehicle Detection: Detect, classify and track static and dynamic objects including vulnerable road users (VRUs) such as pedestrians, bicyclists, and motorcyclists, as well as a large range of vehicle classifications including passenger cars, trucks, buses, emergency vehicles and more.

- Semantic Segmentation: Per-point segmentation of the entire point cloud classifies points as: pedestrians, vehicles, lane markings, drivable regions, road signs, infrastructure, vegetation, and more.

Powered by Aeva Custom Silicon

The advancements in Atlas Ultra are powered by custom silicon including the Aeva CoreVision™ Lidar-on-Chip module, and Aeva X1™ System-on-Chip processor.

- Aeva CoreVision: Designed to strict automotive standards, Aeva’s fourth-generation LiDAR-on-Chip module incorporates all key LiDAR elements including transmitter, detector and an optical processing interface chip. Built on Aeva’s proprietary silicon photonics technology, CoreVision replaces complex optical fibre components with silicon photonics, ensuring quality and enabling mass production at affordable costs.

- Aeva X1: Aeva’s powerful FMCW LiDAR System-on-Chip processor seamlessly integrates data acquisition, point cloud processing, scanning system and application software into a single mixed-signal processing chip. Designed for dependability with automotive-grade functional safety and cybersecurity built in.

Meets Automotive Requirements

Designed to meet challenging OEM requirements across passenger and commercial vehicle applications, Atlas Ultra meets automotive-grade requirements for functional safety (ISO 26262 ASIL-B(D)) and cybersecurity (ISO 21434). It is also IP69K compliant for robust dust and water protection and offers strong shock and vibration endurance.

DVN comments

FMCW Lidar can quickly distinguish between fixed and moving points, which helps locate the ground and obstacles in the 3D image. The high distance and speed resolution of each point in an FMCW Lidar point cloud can enable precise 3D vehicle odometry by analysing echoes from the ground and static objects. This may overlap with the inertial sensing systems typically used in autonomous vehicles. With Aeva’s Lidar, ego-motion tracking can continue during a loss of traction, even when vehicle speed cannot be obtained from the wheels because they are locked.
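The odometry idea can be sketched as a small least-squares problem. Each static return with unit line-of-sight direction d and measured radial velocity r gives one linear constraint, r = -d·v, on the sensor velocity v. The sketch below solves the resulting normal equations in pure Python. It estimates translational velocity only, assumes all returns are static and noise-free, and uses hypothetical function names; a practical system would also need to reject moving points and handle rotation.

```python
import math


def unit(v):
    """Normalize a 3D vector to unit length."""
    n = math.sqrt(sum(c * c for c in v))
    return tuple(c / n for c in v)


def det3(m):
    """Determinant of a 3x3 matrix given as nested lists."""
    return (m[0][0] * (m[1][1] * m[2][2] - m[1][2] * m[2][1])
            - m[0][1] * (m[1][0] * m[2][2] - m[1][2] * m[2][0])
            + m[0][2] * (m[1][0] * m[2][1] - m[1][1] * m[2][0]))


def estimate_ego_velocity(directions, radial_velocities):
    """Least-squares sensor velocity from static returns (positive r = receding)."""
    # Normal equations A v = b with A = sum(d d^T) and b = -sum(r d).
    a = [[0.0] * 3 for _ in range(3)]
    b = [0.0] * 3
    for d, r in zip(directions, radial_velocities):
        for i in range(3):
            b[i] -= r * d[i]
            for j in range(3):
                a[i][j] += d[i] * d[j]
    det = det3(a)
    if abs(det) < 1e-12:
        raise ValueError("line-of-sight directions do not constrain all three axes")
    # Cramer's rule: replace column k of A with b to solve for component k.
    solution = []
    for k in range(3):
        m = [row[:] for row in a]
        for i in range(3):
            m[i][k] = b[i]
        solution.append(det3(m) / det)
    return tuple(solution)


# Synthetic check: generate radial velocities from a known sensor velocity.
true_velocity = (15.0, 0.5, -0.2)
rays = [(1.0, 0.2, -0.1), (1.0, -0.3, 0.05), (1.0, 0.0, -0.3),
        (0.8, 0.5, 0.1), (1.0, 0.4, 0.2)]
directions = [unit(v) for v in rays]
radial = [-sum(di * vi for di, vi in zip(d, true_velocity)) for d in directions]
estimate = estimate_ego_velocity(directions, radial)
print(tuple(round(c, 3) for c in estimate))
```

Since the estimate relies only on the scene, it does not depend on wheel-speed signals, which is why it can keep working when the wheels slip or lock.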