Andras Palffy received the M.Sc. degree in computer science engineering from Pazmany Peter Catholic University, Budapest, in 2016, and the M.Sc. degree in digital signal and image processing from Cranfield University, U.K., in 2015.

From 2013 to 2017, he was an algorithm researcher at Eutecus, a US-based startup (later acquired by Verizon) developing computer vision algorithms for traffic monitoring and driver assistance applications.

He obtained his Ph.D. degree in 2022 from Delft University of Technology, Delft, the Netherlands, focusing on radar-based vulnerable road user detection for automated driving.

In 2022, he co-founded Perciv AI, a startup developing AI-driven, next-generation machine perception for radars.

DVN-1: Perciv.ai is a start-up created in 2022 in the Netherlands that raised new funding at the end of 2024. Could you tell us the purpose of the company and where you are today?

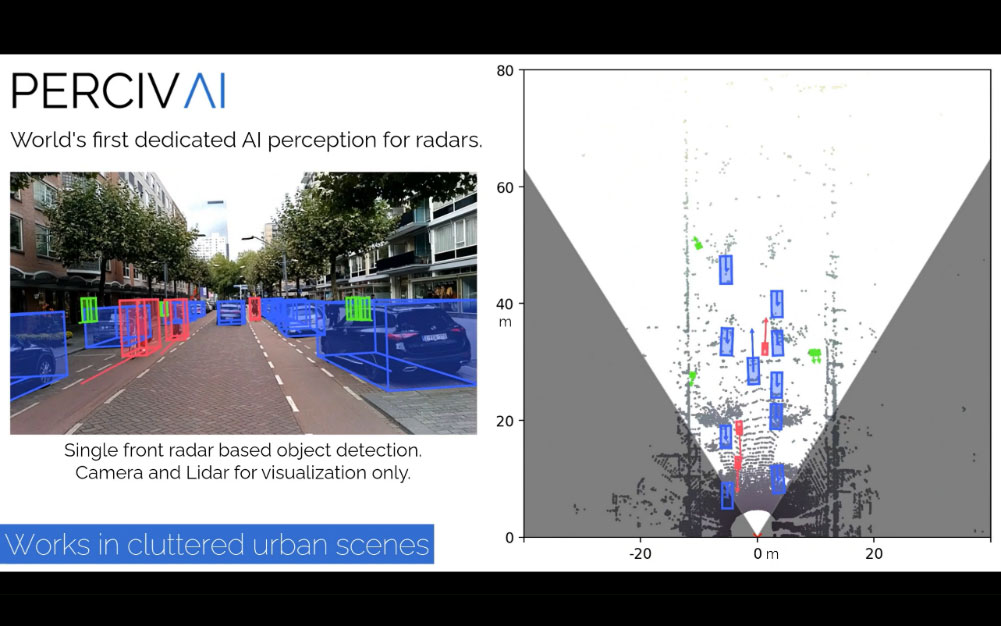

Perciv AI’s mission is to democratize automated systems and vehicles by helping them understand their environment in a weather-robust and scalable way. To do this, Perciv AI develops AI-driven machine radar perception solutions. We believe that radars are not yet exploited to their full potential and that, just like cameras and lidars, they can be pushed beyond their traditional limitations with dedicated AI and perform extremely well at a lower price – even in adverse weather conditions.

DVN-2: Which kind of product / software are you developing?

Our product is a software development kit (SDK) that can be integrated into various host systems using different radar sensors and computing architectures. Our software has three main features:

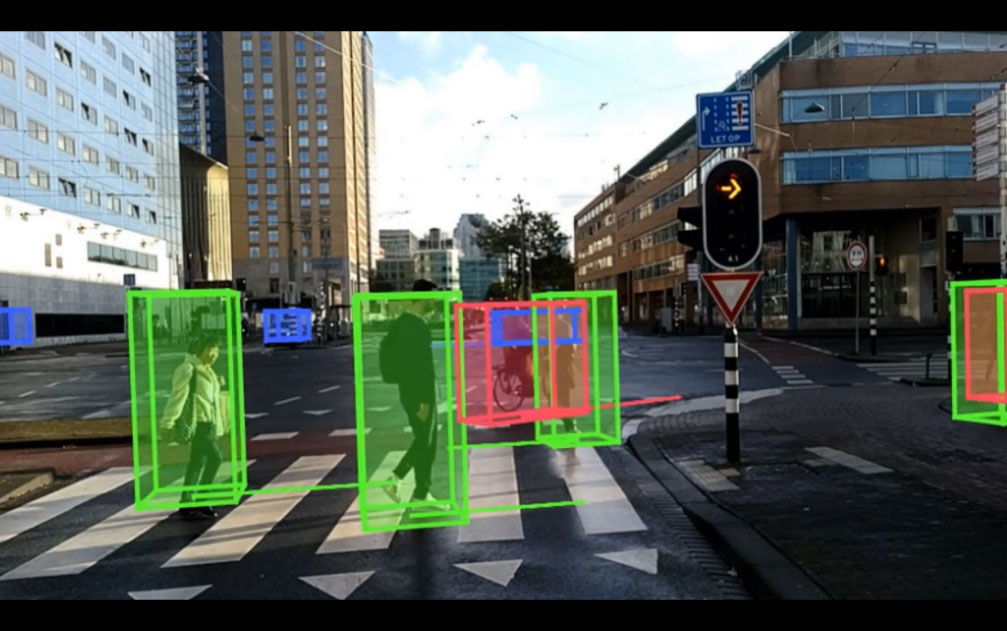

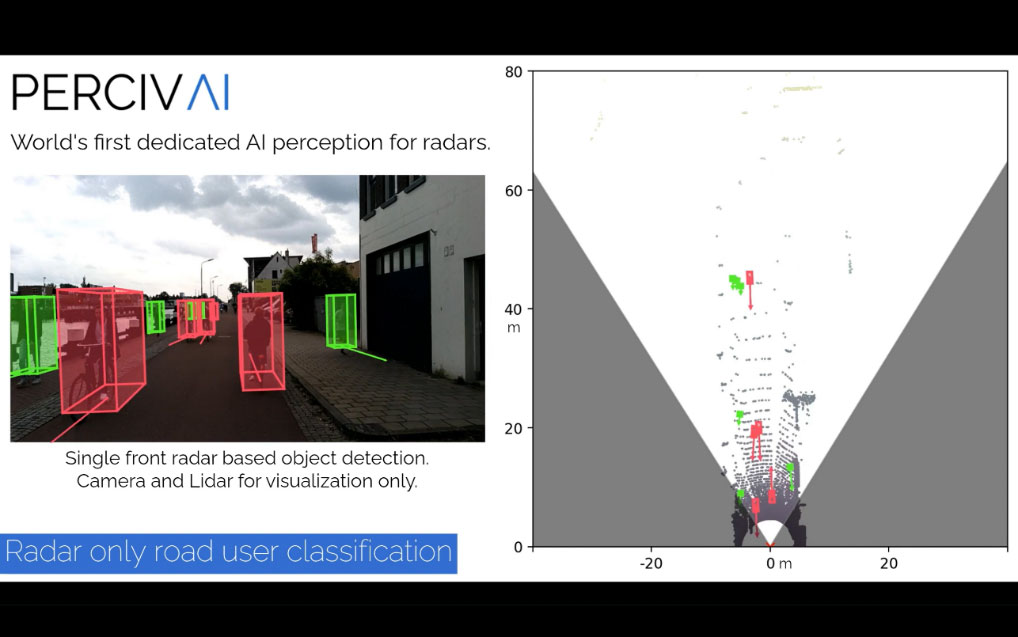

1. Perciv-Objects: A state-of-the-art, radar-only object detection, classification, and tracking module, providing an object list with unprecedented quality for radar systems. It can also be fine-tuned or retrained for specific objects of interest to the customer, handling classes that are often not addressed by conventional automotive radar perception software.

2. Perciv-ClearWay: A drivable surface estimation feature that estimates where the vehicle can drive regardless of the class of the obstacles, even if they are not classified (e.g., lost cargo, animals, or other objects we do not explicitly train for). This feature can also be considered a generic collision avoidance feature.

3. Perciv-EgoTrack: Uses radar input to estimate the vehicle’s own movement, including location, orientation, and velocity. This is particularly valuable for localization where GPS is unavailable, for example, in parking garages or urban canyons.
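The interview does not describe how Perciv-EgoTrack works internally. As a generic illustration of the idea only, one widely used way to get ego-velocity from a single radar is to exploit the Doppler (radial velocity) of detections from static surroundings: for a static reflector at azimuth θ, the measured radial velocity is v_r = -(v_x cos θ + v_y sin θ), where (v_x, v_y) is the sensor's own velocity. The Python sketch below fits (v_x, v_y) by least squares and uses an exhaustive, RANSAC-style consensus over detection pairs to reject moving objects. The function names, the 0.3 m/s inlier threshold, and the sign and azimuth conventions are assumptions chosen for illustration; the sketch ignores yaw rate and sensor mounting offsets, and it is not Perciv AI's method.

```python
import math
from typing import List, Tuple

# Hypothetical detection format: (azimuth in rad, measured counterclockwise from
# boresight; radial velocity in m/s, positive when the target moves away).
Detection = Tuple[float, float]


def _fit(points: List[Detection]) -> Tuple[float, float]:
    """Least-squares fit of (vx, vy) to v_r = -(vx*cos(az) + vy*sin(az))."""
    scc = scs = sss = scb = ssb = 0.0
    for az, vr in points:
        c, s, b = math.cos(az), math.sin(az), -vr
        scc += c * c
        scs += c * s
        sss += s * s
        scb += c * b
        ssb += s * b
    det = scc * sss - scs * scs
    if abs(det) < 1e-12:
        raise ValueError("detections do not span enough azimuth angles")
    # Solve the 2x2 normal equations with Cramer's rule.
    vx = (sss * scb - scs * ssb) / det
    vy = (scc * ssb - scs * scb) / det
    return vx, vy


def _residual(az: float, vr: float, vx: float, vy: float) -> float:
    """Distance of one detection from the static-world Doppler model."""
    return vr + vx * math.cos(az) + vy * math.sin(az)


def estimate_ego_velocity(
    detections: List[Detection], inlier_threshold: float = 0.3
) -> Tuple[float, float]:
    """Estimate the sensor's planar velocity (vx along boresight, vy to the left).

    Every pair of detections proposes a velocity hypothesis; the hypothesis that
    explains the most detections wins, and a final least-squares fit uses only
    its inliers. Moving objects violate the static-world model and drop out.
    """
    best_inliers: List[Detection] = []
    for i in range(len(detections)):
        for j in range(i + 1, len(detections)):
            try:
                vx, vy = _fit([detections[i], detections[j]])
            except ValueError:
                continue  # same azimuth: this pair gives no usable hypothesis
            inliers = [
                (az, vr)
                for az, vr in detections
                if abs(_residual(az, vr, vx, vy)) < inlier_threshold
            ]
            if len(inliers) > len(best_inliers):
                best_inliers = inliers
    if len(best_inliers) < 2:
        raise ValueError("not enough consistent static detections")
    return _fit(best_inliers)


if __name__ == "__main__":
    # Sensor driving straight ahead at 10 m/s past five static reflectors,
    # plus one object (e.g. a cyclist) moving away at +2 m/s radial velocity.
    static = [(az, -10.0 * math.cos(az)) for az in (-0.6, -0.3, 0.0, 0.3, 0.6)]
    vx, vy = estimate_ego_velocity(static + [(0.1, 2.0)])
    print(vx, vy)  # recovers approximately (10.0, 0.0)
```

Trying every pair keeps the sketch deterministic; a production system would more likely sample pairs randomly, use more than two dimensions, and integrate the velocity estimates over time to obtain the position and orientation updates mentioned above.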

It is important to note that these features are not solely intended for traditional “on-highway” automotive applications. Our SDK addresses similar challenges across various operational environments, including indoor settings (e.g., logistics centers, forklifts), outdoor environments (e.g., delivery robots, utility vehicles), and off-road settings (e.g., construction and agricultural vehicles).

DVN-3: Which performance metrics & KPIs will you improve? Which critical use cases will be solved?

Perciv AI significantly outperforms current radar perception algorithms in classification (e.g., person versus cyclist versus car) and occupancy mapping, and often rivals the performance of lidar-based systems. Therefore, our technology could be a solution for any use case that requires weather-robust, privacy-safe and, most importantly, cost-efficient 3D perception. The upcoming automotive regulations in both North America and Europe are a prime example of such a need: they require reliable pedestrian detection even at night, which is not achievable with cameras or current radar perception algorithms. Original equipment manufacturers (OEMs) can either invest in lidar sensors, which drastically increase the price, or improve their radar perception stack, potentially by using Perciv AI’s solutions.

DVN-4: What is your competitive advantage compared to the existing radar suppliers?

We see our offering as an entirely new link in the radar value chain. Unlike radar manufacturers, we do not sell the sensor hardware itself; in fact, our solution is highly hardware-agnostic and can function with multiple vendors’ devices. This means that hardware manufacturers that currently struggle to deliver not just great sensors but also great software have a chance to challenge market leaders by collaborating with Perciv AI.

DVN-5: NHTSA has recently published a new specification for pedestrian AEB in dark conditions, more stringent than the EU or NCAP specifications. Could your software bring a significant improvement over current systems (cameras / radar)?

The new NHTSA regulations are indeed more stringent than most of the important specifications currently in place, and we believe that others will follow the trend they have just set.

As explained above, this poses a huge challenge for OEMs, as current camera- and radar-based solutions will not suffice. However, we strongly believe that with our advanced AI-driven radar perception, the regulations could be met without adding new, expensive sensors to the vehicle. We will release recordings demonstrating this later this year.

DVN-6: When will you have a mature product/software for automotive applications, fully validated on public roads? Will you use existing data and validate your software with a SIL (software-in-the-loop) process?

The definition of a mature product varies depending on the exact features, scope, and geography. That being said, we are working hard towards multiple certifications in 2025 and plan to deploy our software first in L4 shuttles in 2026, and in on-road cars and trucks around 2028–2029.

DVN-7: Do you have contacts with potential customers? Are they OEMs or Tier 1s?

We have more than five advanced PoC projects with different automotive players, including European and Asian OEMs and Tier 1s. Beyond that, we have more than eight customers (robot OEMs) in the industrial domain. These are not just potential customers but current customers of Perciv AI, who recognize the need for dedicated AI for radar.

DVN-8: Was CES 2025 a success for you? Could you tell us more about the benefits of this event for a start-up like yours?

Perciv AI’s CES 2025 was a great success. Our booth, with two live demos of our Radar Perception SDK, attracted significant attention, drawing over 400 visitors throughout the event and resulting in over 100 promising leads.

Furthermore, CES facilitated valuable interactions with existing clients, with more than 20 customer meetings taking place during the event. This is yet another indicator that CES is not just a consumer show anymore – it is in fact one of the biggest automotive shows in the world, and thus, very important for startups like Perciv AI.

The event also provided an opportunity for us to connect with our US clients – for some, it was the first face-to-face meeting with Perciv AI!

DVN-9: Could your product/software also be used with other sensor technologies, such as cameras or lidars?

We like to say that we are not a radar company – we are a machine perception company that, beyond cameras and lidars, can also work with radars, which is currently a very rare capability. We often work with the other sensors as well, for example for ground truth generation or sensor fusion. Furthermore, we are in close contact with 4D lidar companies, as their output (a point cloud with x, y, z, and velocity dimensions) is very similar to radar point clouds, and thus our algorithms could work with it just as well. This means that Perciv AI could be one of the first AI perception providers for the upcoming trend of 4D lidars.

DVN-10: Do you think that radar + camera perception systems can outperform lidars? If so, what are the main advantages of radar + camera fusion compared with lidars?

We strongly believe that an advanced radar-camera fusion system can challenge a lidar-based one in most applications, including, but not limited to, automotive. The most straightforward advantage is, of course, the significantly lower cost compared to (similarly performing) lidar sensors. However, that is not all – the fusion approach brings redundancy in case of sensor failure, adds robustness against challenging weather conditions, and also provides information at a longer range.

DVN-11: Imaging radar hardware is becoming more and more complex in order to meet resolution requirements in azimuth and elevation. Do you think that distributed radar architectures could be a solution to meet such requirements?

This is currently a very hot topic in the radar world: at every conference, experts argue for and against dense arrays, sparse arrays, and distributed radar architectures.

We see advantages and disadvantages in each. The distributed approach, in particular, is very attractive because it increases angular resolution, a well-known weakness of current radar systems. On the other hand, it increases the price by requiring more sensor “heads” and highly accurate temporal and spatial calibration. Interestingly, for our technology, the hardware architecture is irrelevant: we take the best point cloud or radar cube data available from the sensor and perform the best possible perception on that data, regardless of whether it comes from a dense array or a distributed approach, for example.
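A rough, back-of-the-envelope illustration of why spreading sensor heads apart helps: a common rule of thumb is that an antenna array's angular resolution is on the order of the wavelength λ divided by the aperture size D. The numbers below assume a 77 GHz automotive radar and two illustrative apertures (5 cm for a single compact sensor, 1 m for heads spread across a vehicle front); they are assumptions for illustration, and they ignore the grating lobes of sparse layouts and the calibration effects mentioned above.

$$
\lambda = \frac{c}{f} = \frac{3\times10^{8}\,\mathrm{m/s}}{77\times10^{9}\,\mathrm{Hz}} \approx 3.9\,\mathrm{mm},
\qquad
\Delta\theta \approx \frac{\lambda}{D}
$$

$$
D = 0.05\,\mathrm{m}:\ \Delta\theta \approx 0.078\,\mathrm{rad} \approx 4.5^{\circ},
\qquad
D = 1\,\mathrm{m}:\ \Delta\theta \approx 0.0039\,\mathrm{rad} \approx 0.22^{\circ}
$$

Under this approximation, a twentyfold larger aperture gives roughly twentyfold finer angular resolution, which is the gain that the extra heads and the tighter synchronization are paying for.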