A number of companies have been using generative AI for AV development over the last few years, but it introduces a whole new set of safety challenges that traditional methods cannot address.

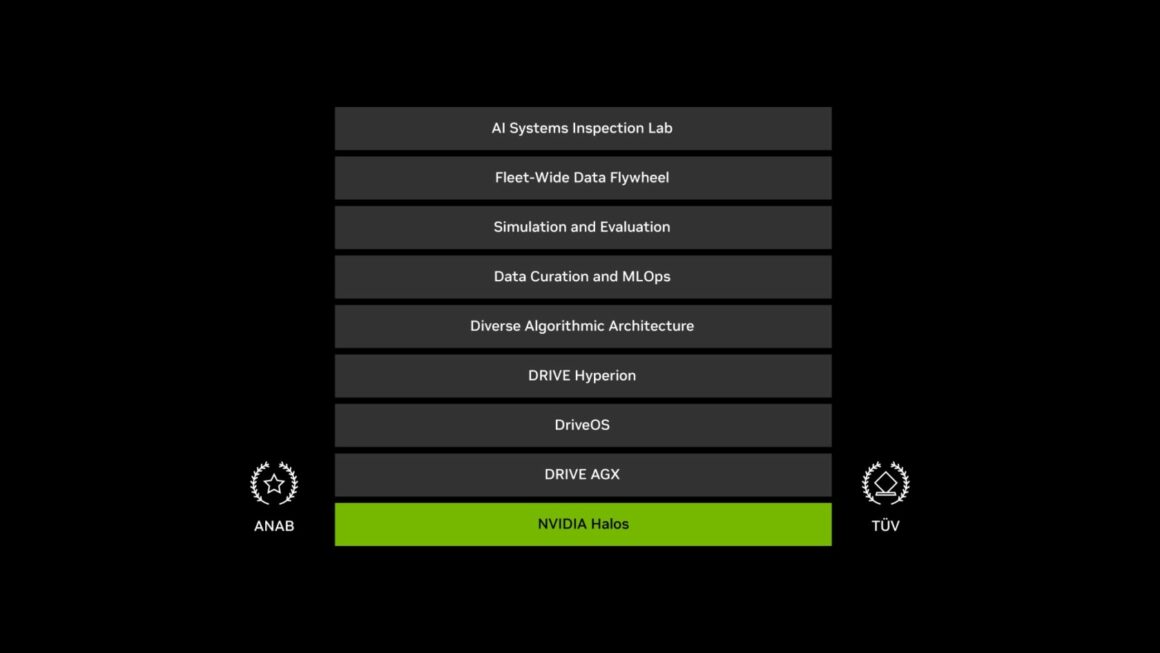

Nvidia Halos is a full-stack safety framework for autonomous driving that includes hardware, software, algorithms, simulation environments and validation services. Nvidia operates the world’s first AI Systems Inspection Lab, accredited under ISO/IEC 17020, where such systems can be validated. The lab's scope includes evaluation and validation for functional safety (ISO 26262) on Nvidia DRIVE AGX platforms, as well as safety of the intended functionality (ISO 21448), cybersecurity (ISO/SAE 21434), AI functional safety (ISO/PAS 8800, ISO/IEC TR 5469) and the relevant UN regulations (UN-R 79, 13-H, 152, 155, 157 and 171).

Customers and partners can use the lab to validate sensors, compute modules and other elements of the autonomous driving solution.

Nvidia says it has invested more than 15,000 engineering years in vehicle safety and filed more than 1,000 safety-related patents. For customers, building on pre-certified components and tools can simplify and streamline compliance.

The Halos platform includes the Nvidia SoCs, which have built-in safety mechanisms (such as ECC checks on memory, health monitoring and other features) to ensure reliable execution. On top of that sits the safety-certified DriveOS™ operating system, which runs on the DRIVE AGX hardware platforms. These platforms also include redundancy for safe operation.

Halos software libraries include APIs for safety data creation, curation and reconstruction. These can be used to train AI (foundation) models and to interface with simulation and validation environments. Above that layer are mechanisms to capture data from a fleet of vehicles and, finally, the AI Systems Inspection Lab, which partners can use for testing, validation and certification.

Another key element needed alongside an end-to-end autonomous driving AI model is an independent safety monitor that provides a separate set of algorithms and paths to avoid collisions. For full redundancy, this monitor often runs on a separate, asymmetric processor, or at least on a dedicated safety island.
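To make the idea of an independent safety monitor concrete, here is a minimal sketch in Python. It is not Nvidia's implementation or any Halos API: the `Obstacle`, `Command` and `safety_monitor` names, the time-to-collision rule and the threshold values are all assumptions chosen for illustration. The point is only that the monitor uses its own simple logic, separate from the AI planner, and can override the planner's output.

```python
from dataclasses import dataclass
from typing import Iterable


@dataclass
class Obstacle:
    """Hypothetical obstacle record from an independent perception path."""
    gap_m: float               # longitudinal distance to the obstacle, metres
    closing_speed_mps: float   # positive when the gap is shrinking


@dataclass
class Command:
    """Hypothetical longitudinal command sent to the vehicle."""
    accel_mps2: float          # requested acceleration (negative = braking)
    source: str                # "planner" or "safety_monitor"


def time_to_collision(obstacle: Obstacle) -> float:
    """Seconds until contact at constant closing speed (inf if not closing)."""
    if obstacle.closing_speed_mps <= 0.0:
        return float("inf")
    return obstacle.gap_m / obstacle.closing_speed_mps


def safety_monitor(
    planner_cmd: Command,
    obstacles: Iterable[Obstacle],
    ttc_threshold_s: float = 2.0,   # assumed threshold, illustration only
    brake_mps2: float = -6.0,       # assumed emergency deceleration
) -> Command:
    """Pass the planner command through unless a collision looks imminent."""
    min_ttc = min(
        (time_to_collision(o) for o in obstacles),
        default=float("inf"),
    )
    if min_ttc < ttc_threshold_s:
        # Override: brake at least as hard as the emergency deceleration.
        return Command(
            accel_mps2=min(planner_cmd.accel_mps2, brake_mps2),
            source="safety_monitor",
        )
    return planner_cmd


if __name__ == "__main__":
    planner = Command(accel_mps2=0.5, source="planner")
    scene = [Obstacle(gap_m=10.0, closing_speed_mps=10.0)]
    print(safety_monitor(planner, scene))
```

In a real vehicle this check would run on separate hardware with its own sensor inputs, so that a fault in the main AI model or its processor cannot also disable the monitor.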

Some OEMs continue to develop their own AI models and have invested in their own development and validation environments, but this has become very expensive, especially with the advent of generative AI.

Tesla recently disbanded its Dojo supercomputer team, and we see more and more OEMs partnering with Nvidia to use not just the AV hardware but also the complete development environment. Simulation, test and validation are a key part of the solution, and it might not make sense to try to duplicate them when OEMs can instead leverage the work that Nvidia has already done.

There are other alternatives in the market for AV software development and simulation (see, for example, the Applied Intuition story below), but safety validation and certification will remain a big part of the effort to deploy such systems in autonomous vehicles.