# Bright Way Vision gated camera tested in the recent AI-SEE project

The VISDOM camera is a market-ready CMOS-based imaging system that enhances the safety and visual perception of driver-assisted and autonomous vehicles. Powered by Bright Way Vision’s proprietary Gated Vision technology, VISDOM has been tested and proven fully operational under stress-test weather and lighting conditions: fog, rain, snow, darkness, and blinding glare.

Designed for AI recognition systems, VISDOM’s algorithm is also the only one that alerts for untrained objects, drawing on a database of over 100,000 classified, annotated, road-related objects.

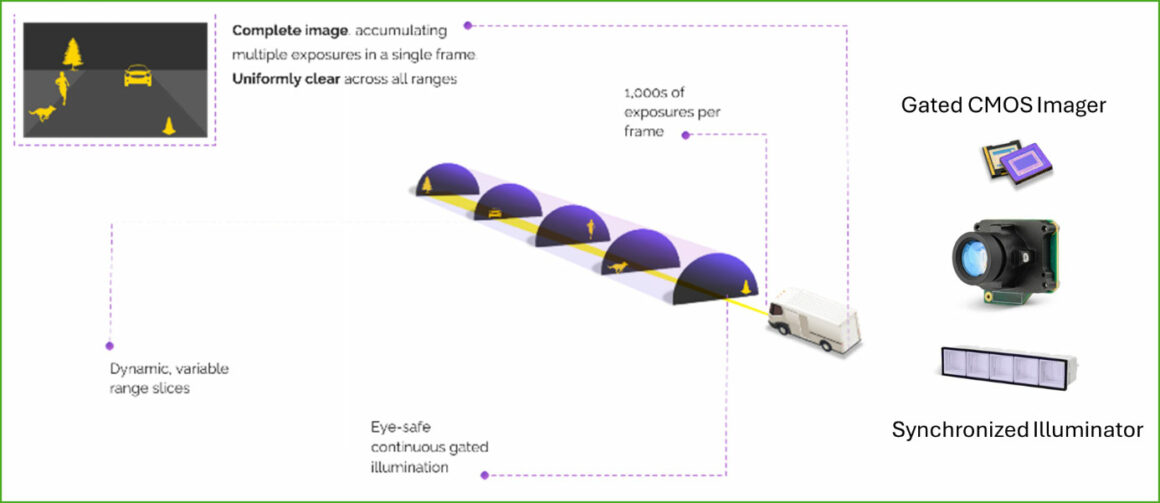

Bright Way’s Gated Vision principles

Gated Vision uses eye-safe NIR illumination pulses to generate ‘slices’ of the space ahead, while thousands of synchronized micro exposures of the gated camera build a complete illuminated image of the road ahead. Two crucial elements are involved in this gated vision system:

- A gated NIR CMOS imager, integrated in the VISDOM camera, generates an image with the right amount of illumination at every range without affecting other ranges. It collects data from predetermined distances (slices) ahead of the vehicle while ignoring interference along the way, such as rain, fog, mist, snow, and backscatter. When combined with the standard day camera already in every vehicle sensor suite, the Gated Vision camera system fulfils the vehicle perception requirement to see clearly night and day, in any weather.

- An illuminator based on a vertical-cavity surface-emitting laser (VCSEL) device generates the required illumination power. This required developing a high-power VCSEL device that can pulse thousands of times per frame, driven by a sophisticated laser driver.

These key components have been designed from the earliest design phase to comply with the relevant industry standards: AEC-Q100 or AEC-Q102, and ISO 26262.

Aside from providing clear images in any weather condition and detecting reflective objects, gated imaging technology can also estimate distance, using its inherent slice structure to range the image. By utilizing slicing techniques, the gated imaging camera system can provide high-quality images and an accurate depth map in any weather, day or night. The resulting image stream can be fed into any machine vision processing pipeline and used in conjunction with detection algorithms with minimal effort and adjustment.
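The slice principle follows from time-of-flight arithmetic: light reflected from a target at range R returns to the camera after 2R/c. The following is a minimal sketch of that relationship, assuming an idealized zero-width illumination pulse and a rectangular gate. The function name `gate_timing` and the 150 to 200 m slice are illustrative assumptions, not Bright Way Vision parameters.

```python
C = 299_792_458.0  # speed of light in vacuum, m/s


def gate_timing(r_min_m: float, r_max_m: float) -> tuple[float, float]:
    """Return (delay_s, width_s) of an idealized gate for one range slice.

    Hypothetical helper: assumes a zero-width pulse emitted at t = 0 and
    ignores pulse shape, sensor rise time, and atmospheric effects.
    """
    if not 0.0 <= r_min_m < r_max_m:
        raise ValueError("expected 0 <= r_min_m < r_max_m")
    delay_s = 2.0 * r_min_m / C              # round trip to the near edge
    width_s = 2.0 * (r_max_m - r_min_m) / C  # extra round trip across the slice
    return delay_s, width_s


# Illustrative slice from 150 m to 200 m ahead of the vehicle.
delay, width = gate_timing(150.0, 200.0)
print(f"open gate after {delay * 1e6:.3f} us, keep open for {width * 1e9:.1f} ns")
```

Under these assumptions, backscatter from rain or fog closer than the near edge of the slice arrives before the gate opens and is not integrated, which is the effect the text describes.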

Bright Way Vision gated cameras took part in the recently concluded AI-SEE project.

AI-SEE is a PENTA-EURIPIDES²-funded project. PENTA and EURIPIDES² are Eureka Network Clusters, operated by AENEAS, that promote the generation of innovative, industry-driven, pre-competitive R&D projects around Smart Electronic Systems.

- Project duration: 43 months, 1st June 2021 – 31st December 2024

- Total costs: €20 million

- Funding from national public authorities (PENTA EURIPIDES² label funding): €10 million

- Coordinator: Mercedes-Benz AG

- Partners: Mercedes-Benz AG, Patria Land Oy, Magna Sweden AB, Robert Bosch GmbH, Brightway Vision Ltd, TORC Europe, TORC Canada, Meluta Oy, Unikie Oy, VTT Technical Research Centre of Finland Ltd, ANSYS Germany GmbH, AVL List GmbH, University of Ulm – Institute of Applied Photonics and Optics, Fifty2 Technology GmbH, Basemark Oy, ams-OSRAM AG, OQmented GmbH, University of Stuttgart – Institute of Semiconductor Engineering, Technical University Ingolstadt – CARISSMA, Institute of Automated Driving, AstaZero AB

AI-SEE Project scope:

To meet safety standards, Automated Driving Systems must, at a minimum, continuously assess weather and road conditions to determine when automated driving can safely operate. When weather or visibility conditions become too severe, the system must promptly alert the driver to take control, ensuring safety while building trust in these advanced technologies. Recognizing the essential role of a safety-critical weather detection system, the AI-SEE project made progress in developing a robust multi-layered perception system. By integrating high-resolution sensors, adaptive AI algorithms, and comprehensive simulation and testing environments, the project partners developed solutions that enable vehicles to navigate adverse conditions with improved resilience.

Combining hardware and AI advancements, the project focused on the following five objectives:

- High resolution adaptive all-weather sensor suite with novel sensors.

- AI platform for predictive detection of prevailing environmental conditions including signal enhancement and sensor adaptation.

- Smart Fusion to create a 24/365 adaptive all-weather robust perception system.

- Novel simulation path which realistically simulates adverse weather near the sensor to adapt and test the system on both real and artificially generated road scenes.

- System validation plan and driving test campaigns.

In particular, the driving testing campaigns in controlled environments and on public roads showcased the robustness of the AI-SEE all-weather multi-sensor perception system and its capability to operate in diverse lighting and weather conditions.

One of the key outcomes of the AI-SEE project is the novel sensing system’s ability to detect and identify obstacles, including small hazards, up to 200 meters away, even in low visibility and adverse weather conditions. “This is a giant step towards SAE L3 market deployment, and enhances the safety of existing ADAS systems”, says AI-SEE Coordinator Dr. Werner Ritter, Mercedes-Benz AG.

The project’s key findings and results were showcased during the Final Event held at AstaZero, a project partner with the world’s first full-scale independent test environment for future road safety. The event featured high-level presentations from AI-SEE partners, an exhibition, and two live demonstrations that highlighted the project’s innovations.

DVN comments

Bright Way’s camera operates like a flash lidar but with a resolution of over 2 megapixels. It uses gated photodetectors and conventional deep learning algorithms for object detection, and determines range from the closest slice in which the object is detected. Given the sensitivity of CMOS pixels and the brief exposure time, very high pulsed power is needed to achieve adequate range while maintaining overall Class 1 eye safety.
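The "closest slice" ranging rule described above can be sketched as follows. This is an illustration only, assuming each slice is a `(near_m, far_m)` pair and that some detector has already reported, per slice, whether the object is visible. The name `estimate_range` and the slice boundaries are hypothetical and do not describe Bright Way Vision's implementation.

```python
from typing import Optional, Sequence, Tuple

Slice = Tuple[float, float]  # (near_m, far_m) bounds of one range slice


def estimate_range(slices: Sequence[Slice],
                   detected: Sequence[bool]) -> Optional[Slice]:
    """Return the nearest slice in which the object was detected, or None.

    Hypothetical helper: `detected[i]` is assumed to come from running an
    object detector on the image of `slices[i]`.
    """
    if len(slices) != len(detected):
        raise ValueError("slices and detected must have the same length")
    hits = [s for s, seen in zip(slices, detected) if seen]
    # The range estimate is bounded by the closest slice containing the object.
    return min(hits, key=lambda s: s[0]) if hits else None


# Illustrative slices; the object shows up in the two farther ones.
slices = [(0.0, 50.0), (50.0, 100.0), (100.0, 150.0)]
print(estimate_range(slices, [False, True, True]))  # (50.0, 100.0)
```

With this rule the range resolution is set by the slice width, so narrower slices give finer distance estimates at the cost of more gated exposures per frame.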

# Stradvision showcased its SVNet 3D Perception Network at CES 2025

South Korea’s Stradvision recently announced the use of the latest TDA4 silicon from Texas Instruments for a Level 2 domain controller and signed an agreement to run its software on AMD’s FPGAs. The Stradvision SVNet 3D Perception Network uses the TI TDA4VPE-Q1 system-on-a-chip (SoC) in a deep-learning-powered ADAS and autonomous driving system.

This system supports various imaging solutions, including Level 2 and Level 2+ ADAS, automatic valet parking, 3D surround view, and more, making it a versatile and cost-effective choice for future automotive applications.

The TDA4VPE integrates advanced sensor fusion, edge AI, graphics, and video co-processing. It features 16 TOPS of AI performance, four ARM Cortex-A72 cores, optimized memory architecture, and a heterogeneous design for high efficiency while reducing system costs.

Using this chip, the SVNet 3D Perception Network converts 2D camera data into accurate 3D environmental maps, enabling vehicles to perceive their surroundings clearly. It supports high-level autonomous driving across various Operational Design Domains (ODD), including complex conditions.

The system was demonstrated at CES 2025 in Las Vegas, showcasing multi-camera inputs for advanced driver assistance systems (ADAS) functions such as Level 2+ highway driving, auto valet parking, 3D surround view, and memory-based automatic parking.

The agreement with AMD marks the first port of the SVNet 3D Perception Network to AMD’s Versal AI Edge series devices. At CES 2025, Stradvision and AMD presented a joint technology demonstration with an 8MP front-facing camera using the AI inference engines in the FPGA.

“Our collaboration with AMD combines real-time processing technology with our SVNet advanced 3D perception network, setting new standards for performance, reliability, and scalability in automated driving systems,” said Philip Vidal, chief business officer of Stradvision.

“Stradvision is advancing autonomous vehicle technologies by making ADAS more accessible and affordable without sacrificing capability,” added Wayne Lyons, Senior Director of Marketing, Automotive Segment at AMD.

“We are collaborating with Texas Instruments to deliver cost-effective yet powerful solutions to the automotive industry,” Vidal stated. “The TDA4VPE-Q1 automotive SoC, paired with SVNet, exemplifies our commitment to advancing ADAS technologies. With production-ready software development concluding in 2025 and a Start of Production (SoP) targeted for 2026, we aim to meet market demands. This partnership also highlights our dedication to global expansion, addressing the increasing need for innovative and scalable solutions worldwide.”

“The TDA4VPE-Q1 automotive SoC for L2 domain controllers, featuring graphics, AI, and video co-processing, represents our vision of providing high-performance, flexible, and efficient solutions for next-generation automotive applications,” said Mike Pienovi, product line manager at Texas Instruments. “Our work with Stradvision and their SVNet software illustrates how technology can transition from 2D to 3D perception networks.”

Additionally, Stradvision has recently signed a master license agreement with Renesas Electronics to integrate the SVNet software with the R-Car system-on-chip platform as part of the Renesas RoX SDV development platform.

Stradvision and its software have achieved TISAX AL3 for information security management and are certified to ISO 9001:2015 for Quality Management Systems and ISO 26262 for Automotive Functional Safety.

DVN comments

Founded in 2014, Stradvision is an automotive industry pioneer in artificial intelligence-based vision perception technology for ADAS. Its SVNet software is being deployed on various vehicle models in partnership with OEMs in Germany and Japan. The company provides services for ADAS and autonomous vehicles globally and has a workforce of over 300 employees in Seoul, San Jose, Detroit, Tokyo, Shanghai, and Düsseldorf. Stradvision will be participating in our upcoming DVN Workshop in Detroit on April 10, 2025.